The Sunday Scowl: Why Essay Grading Drains ESL Teachers

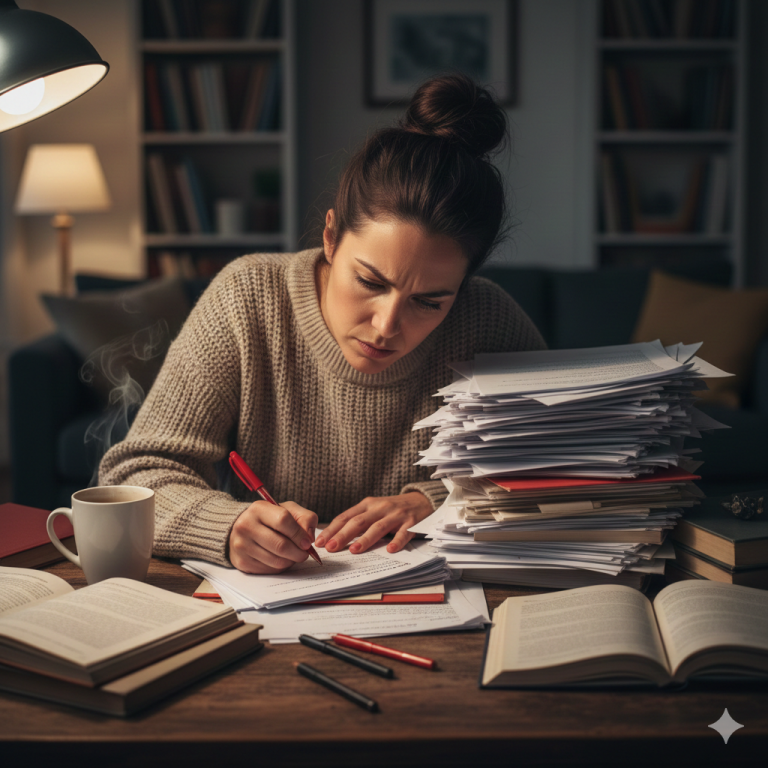

If you teach writing, you probably know the feeling.

It’s Sunday afternoon. Your coffee has gone cold. You’re halfway through a stack of essays, and somewhere around essay fifteen, your brain starts to blur.

You’re still working. You’re still trying to be fair. But the sharpness is gone.

What should feel like careful grading starts to feel like survival.

I’ve come to think of that moment as the Sunday Scowl.

Not because teachers are lazy. Not because we don’t care. Quite the opposite. It happens because writing feedback asks us to make hundreds of small judgment calls in one sitting, and that kind of work is mentally expensive. Good teachers don’t stop caring when they get tired. They just get overloaded.

Author note: I teach ESL in China and built SyllabixMark after seeing how grading fatigue affects consistency, clarity, and confidence.

The real problem isn’t effort

For a long time, I assumed the answer was to work faster or become “more efficient” at grading.

But that’s not really the issue.

The hard part of grading writing isn’t just the time. It’s the repetition.

Reading for meaning.

Checking whether the student actually answered the question.

Noticing repeated vocabulary.

Spotting grammar patterns.

Comparing the script to a rubric.

Writing comments that are specific enough to help but short enough to be realistic.

Do that once and it’s fine.

Do it thirty times in a row, and something starts to slip.

Usually it’s not your standards that slip. It’s your consistency.

Why fluent writing can fool us

One of the biggest traps in essay marking is that some pieces of writing sound better than they really are.

The vocabulary is confident. The sentences are smooth. The structure looks tidy. On first read, the essay feels strong.

But then you slow down and ask a harder question:

Did this student actually answer the prompt clearly and fully?

That’s where the cracks often appear.

Sometimes the argument is thin. Sometimes the conclusion doesn’t match the position. Sometimes the paragraph sounds intelligent but doesn’t really say much.

And when we’re tired, it’s easy to subconsciously fill in those gaps for the student.

That’s not a character flaw.

That’s cognitive fatigue.

The shift that changed my grading

One thing that has helped me is grading in a more deliberate order.

Instead of reading an essay holistically and deciding how it “feels,” I try to separate the dimensions. I look at the response to the prompt first. Then organization. Then language. Then grammar patterns.

It’s not glamorous, and it isn’t always faster at the beginning.

But it is more honest.

That one shift has made me notice how often a polished-looking essay hides weak development or an incomplete response. It has also made my feedback more specific, because I’m reacting less to the overall vibe and more to the actual writing in front of me.
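For readers who like to see a routine written down, the fixed order can be sketched in a few lines of Python. This is only an illustration: the `EssayMarks` class, the 1 to 5 scores, and the sample note are assumptions made up for the example, not part of any real tool or rubric.

```python
from dataclasses import dataclass, field

# Fixed order taken from the text: response, organization, language, grammar.
DIMENSIONS = ("response", "organization", "language", "grammar")


@dataclass
class EssayMarks:
    """Per-dimension marks for one essay (hypothetical structure)."""
    scores: dict = field(default_factory=dict)
    notes: dict = field(default_factory=dict)

    def mark(self, dimension, score, note=""):
        # Enforce the deliberate order: dimensions must be marked in turn.
        done = len(self.scores)
        expected = DIMENSIONS[done] if done < len(DIMENSIONS) else None
        if dimension != expected:
            raise ValueError(f"expected {expected!r}, got {dimension!r}")
        self.scores[dimension] = score
        self.notes[dimension] = note

    def weakest(self):
        # Lowest-scoring dimension marked so far; ties go to the earlier one.
        return min(self.scores, key=self.scores.get)


# Assumed 1-5 scale, purely for illustration.
marks = EssayMarks()
marks.mark("response", 2, "second part of the prompt is not answered")
marks.mark("organization", 4)
marks.mark("language", 4)
marks.mark("grammar", 3)
print(marks.weakest())  # response
```

The point of the ordering check is that a polished essay cannot earn its language score before the response has been judged on its own.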

Where the burnout really comes from

I don’t think teachers burn out just because they work hard.

A lot of us are willing to work hard.

What wears people down is doing high-focus, repetitive, low-leverage work for hours at a time, especially when it spills into evenings and weekends.

The emotional drain comes from knowing your students need useful feedback while also knowing your energy is fading with every script.

That gap hurts.

You want to be precise. You want to be fair. You want to give the kind of feedback that actually helps a student improve.

But after enough essays, you start to feel your own judgment getting heavier and slower. And that’s where frustration creeps in — not because you don’t know what good writing looks like, but because you’re being asked to do too much of the same mental task in one sitting.

What should be automated — and what shouldn’t

This is the reason I started thinking seriously about AI-assisted grading for teachers.

Not because I believe a machine should replace a teacher’s judgment.

It shouldn’t.

But there is a big difference between replacing judgment and reducing mechanical load.

Teachers should still decide what matters.

Teachers should still interpret the writing.

Teachers should still coach the student.

But repetitive scanning work — repeated error spotting, repeated rubric cross-checking, repeated pattern detection — is exactly the kind of thing software should help with.

That’s not lowering standards.

If anything, it protects them.

Because when the repetitive part gets lighter, teachers have more energy left for the part that actually requires expertise: diagnosis, explanation, and teaching.
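As one small example of what “mechanical load” can mean, here is a minimal sketch of a repeated-vocabulary check in Python. It is not how SyllabixMark works; the function name `overused_words`, the tiny stop-word list, and the threshold of three uses are all assumptions chosen for illustration.

```python
import re
from collections import Counter

# Small illustrative stop-word list (an assumption); a real one would be longer.
STOP_WORDS = {
    "the", "a", "an", "and", "or", "but", "of", "to", "in", "is", "are",
    "it", "that", "this", "for", "on", "with", "as", "be", "was", "because",
}


def overused_words(text, min_count=3):
    """Return (word, count) pairs for content words used at least min_count times."""
    # Lowercase the text and pull out runs of letters and apostrophes.
    words = re.findall(r"[a-z'’]+", text.lower())
    # Ignore stop words and very short tokens.
    counts = Counter(w for w in words if w not in STOP_WORDS and len(w) > 2)
    return [(w, c) for w, c in counts.most_common() if c >= min_count]


essay = (
    "Technology is important. Technology helps students. "
    "Students think technology is important because it is important."
)
flagged = overused_words(essay)
```

On this sample, `flagged` contains “technology” and “important”, each used three times. A list like that is only a prompt: the teacher still decides whether the repetition is a weakness or a reasonable choice for the task.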

A better Sunday

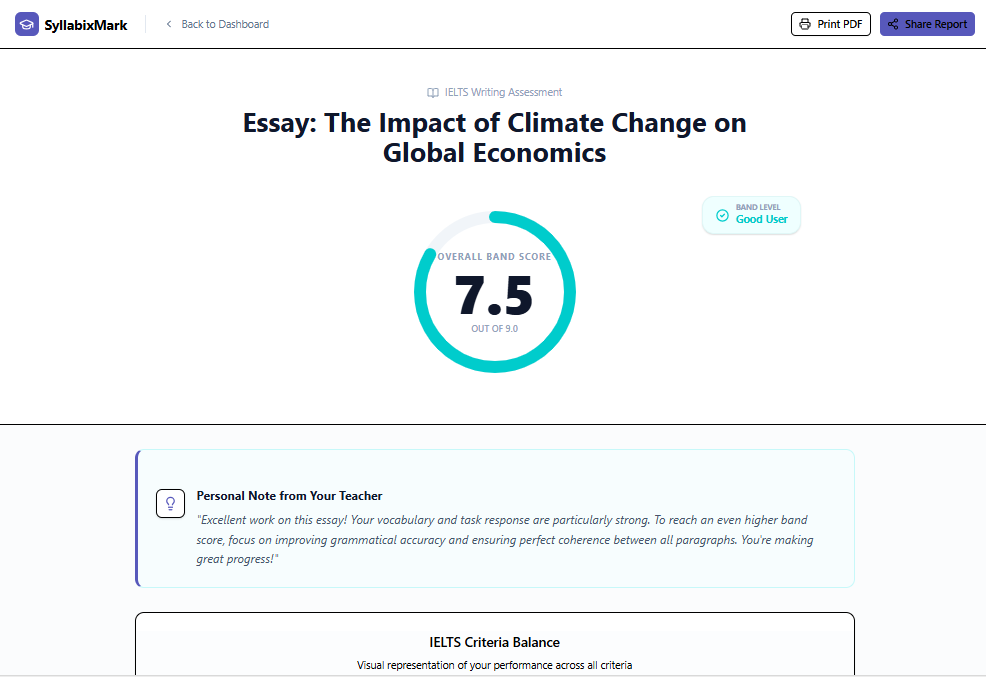

I built SyllabixMark around that idea.

The goal isn’t to remove the teacher from the process. The goal is to remove the exhausting parts that eat time without adding much value when done manually for the twentieth time in a row.

If software can help a teacher spot the likely issues faster, organize feedback more clearly, and stay more consistent across a stack of essays, that doesn’t make the teacher less important.

It makes the teacher more effective.

And maybe, just maybe, it gives them a better Sunday.

Final thought

The Sunday Scowl isn’t just about workload.

It’s about the feeling that your weekends are being consumed by work that drains your energy before it uses your expertise.

That’s the part I want to change.

Not by pretending grading is easy.

But by respecting the difference between work only a teacher can do — and work a teacher should never have had to do manually for this long.

Try SyllabixMark

If essay marking is eating your weekends, try SyllabixMark and see what changes when the mechanical scan is done for you.

Start with a few essays. Keep your own judgment. Notice what gets lighter.